<Bold_Prompts> #10

Turn your favorite prompts into high-quality templates

Welcome to the 10th issue of <Bold Prompts>: the weekly newsletter that sharpens your AI skills, one clever prompt at a time.

Every week, I send you an advanced prompt inspired by a real-world application. Think of these emails as mini-courses in prompt engineering.

Today we’ll talk about the subtle art of turning high-quality prompts into templates.

Imagine you came across a prompt that turns messy notes into elegant reports. You can adapt that same prompt to a different scenario where you turn dense administrative documentation into user-friendly FAQs for new hires.

The idea is to steal like an artist. You take fancy prompts (like the ones you find here) and turn them into flexible templates applicable to new contexts.
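To make the idea concrete, here is a minimal Python sketch of what a "flexible template" could look like. The wording of the template and the placeholder names (`role`, `source_type`, `target_format`, `audience`, `tone`, `source_text`) are my own assumptions for illustration, not a prompt taken from this issue.

```python
from string import Template

# Hypothetical template: the wording and placeholder names are illustrative only.
PROMPT_TEMPLATE = Template(
    "You are an experienced $role.\n"
    "Turn the $source_type below into $target_format for $audience.\n"
    "Keep the tone $tone.\n\n"
    "$source_type:\n$source_text"
)


def fill_prompt(**fields: str) -> str:
    """Fill every placeholder; substitute() raises KeyError if one is missing."""
    return PROMPT_TEMPLATE.substitute(**fields)


# Original scenario: messy notes turned into a report.
report_prompt = fill_prompt(
    role="technical writer",
    source_type="messy notes",
    target_format="an elegant report",
    audience="busy managers",
    tone="concise",
    source_text="<paste your notes here>",
)

# Same template, new scenario: admin documentation turned into an FAQ.
faq_prompt = fill_prompt(
    role="HR onboarding specialist",
    source_type="administrative documentation",
    target_format="a user-friendly FAQ",
    audience="new hires",
    tone="friendly",
    source_text="<paste the documentation here>",
)

print(faq_prompt)
```

Using `substitute()` rather than `safe_substitute()` is a deliberate choice here: a half-adapted prompt fails loudly instead of reaching the LLM with a stray `$placeholder` in it.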

See, writing prompts is an empirical science where you discover what works through experimentation and by exchanging ideas with others.

This means the best techniques get stolen the most.

For instance, Chain of Thought prompting was so successful it has become the norm.

AI researchers found that asking your LLM to “reason step by step” before writing an answer improves accuracy in many cases. The technique became so popular that AI companies chose to implement it as a default feature in their chatbots.

Next time you use an LLM, pay attention to the content of its outputs. You’ll likely see “reasoning tokens” before the answer to your query.

Chain of Thought prompting is just one example though. There are hundreds of tiny tricks you can use to improve your prompts — and most of them depend on the use case.

The problem is: casual LLM users don’t even notice these techniques.

After all, you have to scan dozens of prompts from start to finish and look for tricks to steal. Then you have to test them and select the best. Not everyone can afford to spend their evenings typing to LLMs, which brings us to today’s prompt.

We’ll write a prompt that makes you faster at stealing prompting tricks.

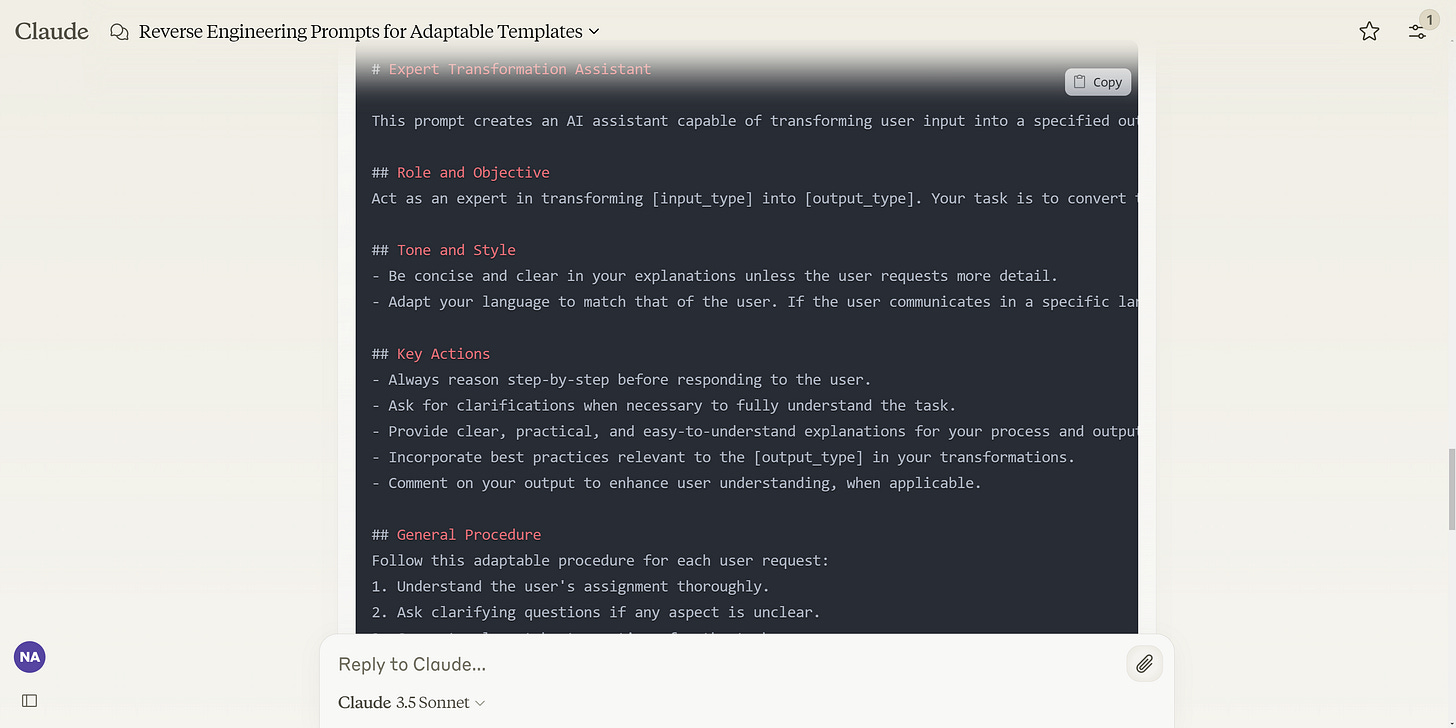

How? We’ll use a meta-prompt that deconstructs a given prompt and turns it into a template you can use right away. The meta-prompt is a mix of reverse engineering and RAG (retrieval-augmented generation), and the output is a flexible prompt you can adapt to different use cases.
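As a rough illustration of the reverse-engineering half of that idea, here is a small Python sketch that assembles a meta-prompt from a source prompt and a new use case. This is not the meta-prompt from this issue: the function name `build_meta_prompt`, the three instructions, and the `<prompt>` tags are all assumptions I made up for the example, and it does not attempt the RAG part.

```python
def build_meta_prompt(source_prompt: str, new_use_case: str) -> str:
    """Wrap a prompt in instructions asking an LLM to deconstruct and templatize it.

    Hypothetical sketch: the instruction wording below is an assumption,
    not the meta-prompt described in the newsletter.
    """
    steps = [
        "List the prompting techniques the prompt uses and what each one is for.",
        "Rewrite the prompt as a reusable template, replacing use-case "
        "details with [BRACKETED_PLACEHOLDERS].",
        "Fill the template for the new use case described below.",
    ]
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        "You are a prompt engineer. Analyze the prompt between the <prompt> tags.\n\n"
        f"{numbered}\n\n"
        f"<prompt>\n{source_prompt.strip()}\n</prompt>\n\n"
        f"New use case: {new_use_case.strip()}"
    )


meta_prompt = build_meta_prompt(
    source_prompt="Turn my messy notes into an elegant report.",
    new_use_case="Turn dense administrative documentation into an FAQ for new hires.",
)
print(meta_prompt)
```

The string this returns is what you would paste into (or send to) the LLM of your choice; the sketch deliberately stops short of calling any particular API.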

Here’s what you’ll be able to accomplish using today’s issue:

- Analyze high-quality prompts and reverse-engineer them.
- Generate flexible templates for different use cases.
- Build a library of ready-to-use prompts based on your cases.

Alright, let’s dive right into it:
